Hello trace-masters!

I am back with my last assignment, in which I have implemented:

- Object Lights

- Mesh lights

- Spherical (also ellipsoid) lights

- Spherical environment lights

- Path Tracing

In this post, we will examine those topics in detail.

Note: All the scenes in this assignment are HDR scenes. Thanks to my perfectly implemented (!) tonemapping procedure, some of my tonemapped .png files come out too dark and some come out overexposed, although all of my .exr renders have correct colors. The EXR Outputs section at the end of this post provides links for viewing my .exr renders.

Mesh and Spherical Lights

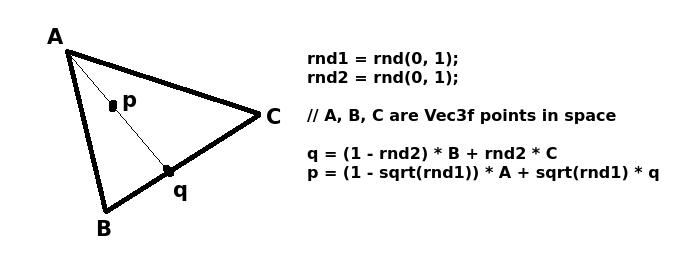

As you know, meshes are composed of triangles. When a mesh is defined as a light, we first randomly choose one of its triangles, with a probability proportional to the triangle's area. Then we choose a point p uniformly inside the chosen triangle by applying the formulas below. That point p is passed to the rest of the raytracer code as the position of the mesh light:

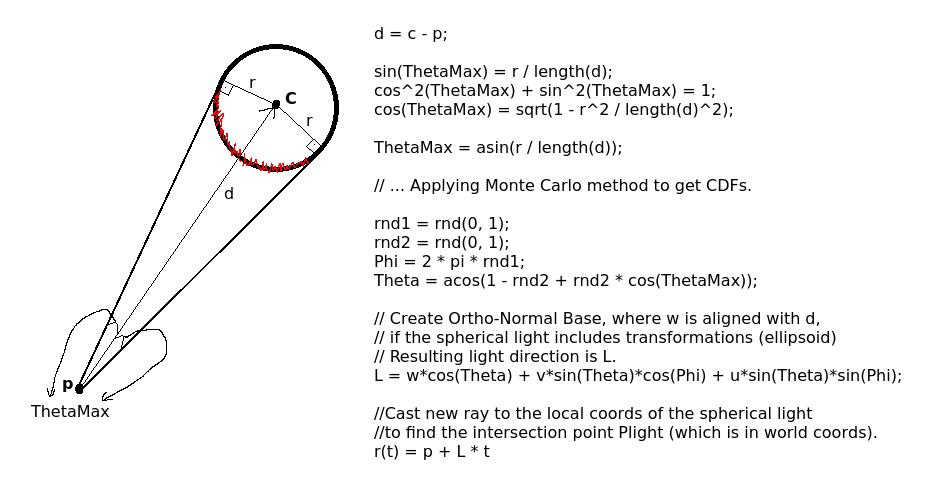
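The two steps above can be sketched roughly as follows. This is a minimal Python sketch and not the raytracer's actual code: the function names (`triangle_area`, `build_area_cdf`, `sample_mesh_light`) are hypothetical, and the square-root barycentric parameterization used here is one standard way to pick a uniform point in a triangle, which may differ from the exact formulas in the image.

```python
import bisect
import math
import random

def triangle_area(a, b, c):
    # Half the length of the cross product of two edge vectors.
    e1 = [b[i] - a[i] for i in range(3)]
    e2 = [c[i] - a[i] for i in range(3)]
    cx = e1[1] * e2[2] - e1[2] * e2[1]
    cy = e1[2] * e2[0] - e1[0] * e2[2]
    cz = e1[0] * e2[1] - e1[1] * e2[0]
    return 0.5 * math.sqrt(cx * cx + cy * cy + cz * cz)

def build_area_cdf(triangles):
    # Normalized running sum of areas; built once per mesh light.
    areas = [triangle_area(*t) for t in triangles]
    total = sum(areas)
    cdf, running = [], 0.0
    for area in areas:
        running += area
        cdf.append(running / total)
    return cdf

def sample_mesh_light(triangles, cdf, rng=random):
    # Step 1: pick a triangle with probability proportional to its area.
    idx = min(bisect.bisect_right(cdf, rng.random()), len(triangles) - 1)
    a, b, c = triangles[idx]
    # Step 2: pick a uniform point inside it (assumed parameterization):
    # p = (1 - s) a + s (1 - t) b + s t c, with s = sqrt(xi1), t = xi2.
    s = math.sqrt(rng.random())
    t = rng.random()
    w_a, w_b, w_c = 1.0 - s, s * (1.0 - t), s * t
    return tuple(w_a * a[i] + w_b * b[i] + w_c * c[i] for i in range(3))
```

For a ceiling light made of two equal triangles, `build_area_cdf` gives `[0.5, 1.0]`, so each triangle is chosen about half of the time. Building the CDF once and searching it with `bisect` keeps the per-sample cost logarithmic in the triangle count.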

Spherical lights require special treatment. We need to uniformly sample a point P_light on the part of the spherical light that is visible from p, the point our ray hit in the scene, so that we can compute the light direction and distance from p. The image below explains the procedure in detail:
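One common way to do this is to sample a direction inside the cone that the sphere subtends as seen from p, and then intersect that direction with the sphere to obtain the point on the light. The sketch below follows that approach. It is an assumption that this matches the procedure in the figure, all function names are hypothetical, and it only handles an untransformed sphere with p outside of it. Note that this samples uniformly in solid angle rather than uniformly over surface area.

```python
import math
import random

def normalize(v):
    n = math.sqrt(sum(x * x for x in v))
    return tuple(x / n for x in v)

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def build_onb(w):
    # Two unit vectors u, v orthogonal to the unit vector w and to each other.
    axis = min(range(3), key=lambda i: abs(w[i]))
    helper = tuple(1.0 if i == axis else 0.0 for i in range(3))
    u = normalize(cross(helper, w))
    v = cross(w, u)
    return u, v

def sample_sphere_light(p, center, radius, rng=random):
    to_center = tuple(center[i] - p[i] for i in range(3))
    d = math.sqrt(sum(x * x for x in to_center))  # assumes d > radius
    w = tuple(x / d for x in to_center)
    u, v = build_onb(w)
    # Half-angle of the cone that just encloses the sphere.
    cos_max = math.sqrt(1.0 - (radius / d) ** 2)
    cos_t = 1.0 - rng.random() * (1.0 - cos_max)
    sin_t = math.sqrt(max(0.0, 1.0 - cos_t * cos_t))
    phi = 2.0 * math.pi * rng.random()
    l = tuple(w[i] * cos_t
              + u[i] * sin_t * math.cos(phi)
              + v[i] * sin_t * math.sin(phi) for i in range(3))
    # Nearest intersection of the ray p + t * l with the sphere.
    disc = max(0.0, radius * radius - d * d * sin_t * sin_t)
    t = d * cos_t - math.sqrt(disc)
    p_light = tuple(p[i] + t * l[i] for i in range(3))
    pdf = 1.0 / (2.0 * math.pi * (1.0 - cos_max))  # per unit solid angle
    return p_light, l, t, pdf
```

The function returns the sampled point, the unit light direction, the distance to the light, and the solid-angle probability density that the radiance estimate would be divided by.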

Below are my mesh and spherical light outputs:

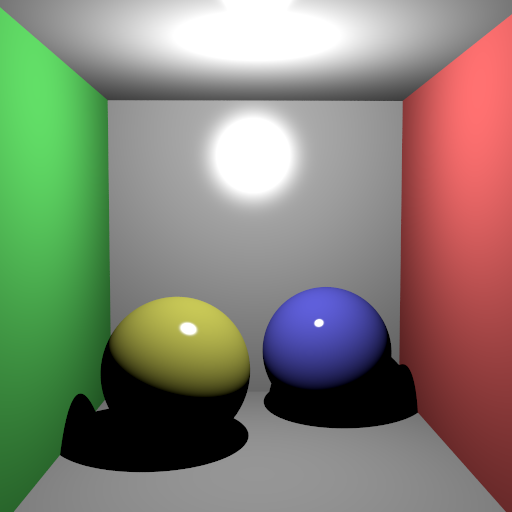

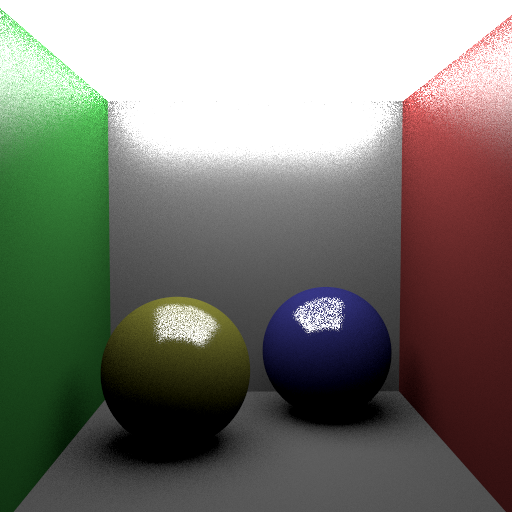

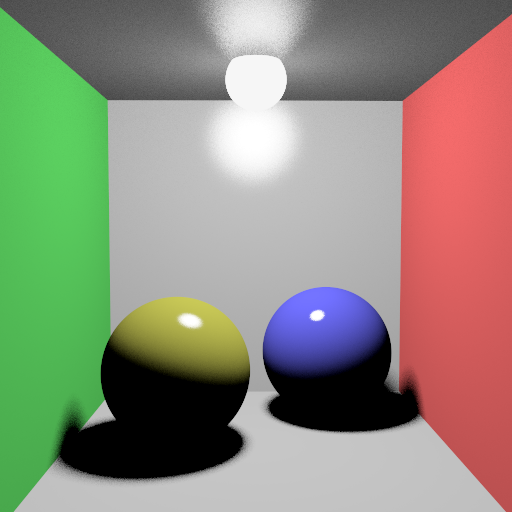

cornellbox_jaroslav_diffuse.xml (512x512) /w 8 thrd, 36 MSAA A point light on the ceiling (50 Watts).

cornellbox_jaroslav_diffuse_area.xml (512x512) /w 8 thrd, 100 MSAA Ceiling is 2-triangle mesh light.

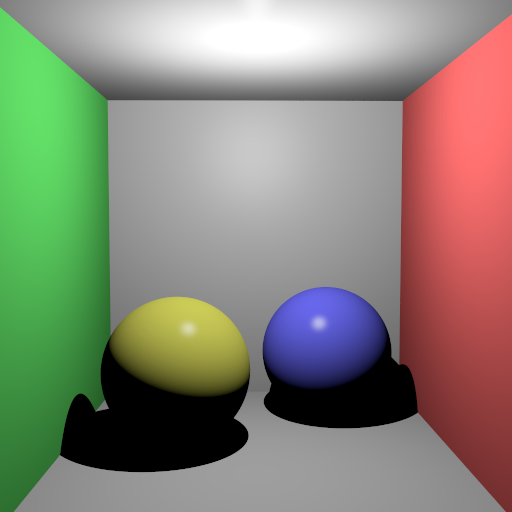

cornellbox_jaroslav_glossy.xml (512x512) /w 8 thrd, 36 MSAA A point light on the ceiling (50 Watts).

cornellbox_jaroslav_glossy_area.xml (512x512) /w 8 thrd, 100 MSAA Ceiling is 2-triangle mesh light.

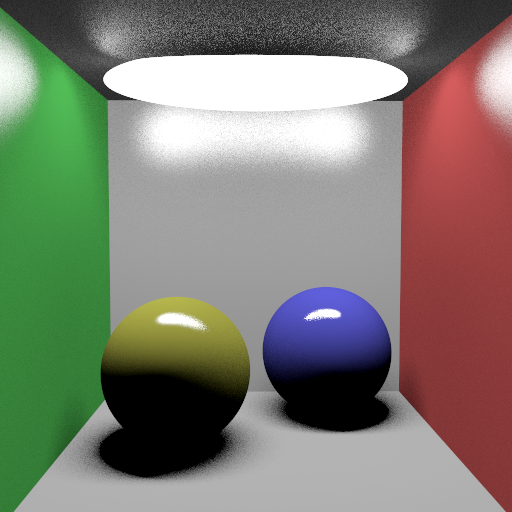

cornellbox_jaroslav_glossy_area_ellipsoid.xml (512x512) /w 8 thrd, 100 MSAA A (5, 1, 1)-scaled sphere (ellipsoid) light on the ceiling.

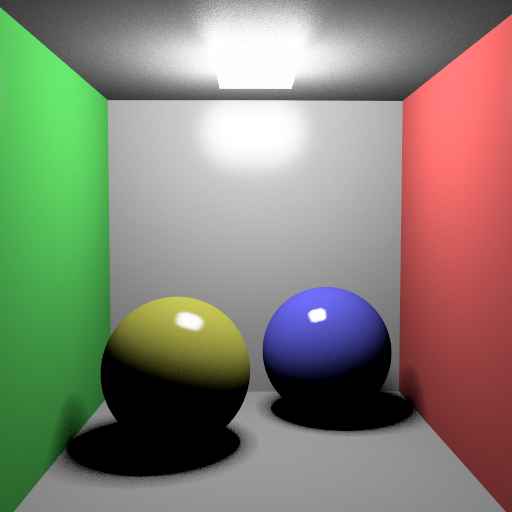

cornellbox_jaroslav_glossy_area_small.xml (512x512) /w 8 thrd, 100 MSAA A cube mesh light on the ceiling.

cornellbox_jaroslav_glossy_area_sphere.xml (512x512) /w 8 thrd, 100 MSAA A non-transformed sphere light on the ceiling.

cornellbox_jaroslav_nobrdf.xml (512x512) /w 8 thrd, 36 MSAA A no-BRDF scene with point light on the ceiling.

Spherical Environment Lights

In this light type, an infinitely large sphere is assumed to envelop the whole scene. Every element of the scene (including the camera) is inside that giant sphere (an abstract sphere, not a real object). An HDR latitude-longitude texture is mapped onto that giant sphere, and it operates as follows:

- If a ray (whose direction is unit L) does not hit any object in the scene, calculate u and v texture coordinates by using Theta and Phi angles, which are found as:

- Theta = acos(L.y);

- Phi = atan2(L.z, L.x);

- u = (-Phi + pi) / (2*pi);

- v = Theta / pi;

- If a ray hits an object in the scene, build an orthonormal basis (ONB) at the hit point, aligned with the surface normal there, and choose a uniformly random direction L in the hemisphere around the normal. Cast a new ray using L as the ray direction. If the newly cast ray does not hit any object, use the method above.

- Using the u and v coordinates, fetch color from the texture image of the spherical environment light (giant enveloping abstract sphere) and use that value as the light radiance.
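The lookup described in the list above can be sketched as follows. The angle and u/v formulas are the ones given above. The function names and the nested-list `texture[row][col]` layout with nearest-texel fetching are assumptions made only for illustration.

```python
import math

def direction_to_uv(L):
    # L is a unit direction (x, y, z); y is treated as "up".
    x, y, z = L
    theta = math.acos(max(-1.0, min(1.0, y)))  # clamp guards rounding error
    phi = math.atan2(z, x)
    u = (-phi + math.pi) / (2.0 * math.pi)
    v = theta / math.pi
    return u, v

def lookup_environment(texture, L):
    # texture: hypothetical list of rows, each row a list of RGB radiance tuples.
    height = len(texture)
    width = len(texture[0])
    u, v = direction_to_uv(L)
    col = min(int(u * width), width - 1)   # nearest texel, clamped at the edge
    row = min(int(v * height), height - 1)
    return texture[row][col]

# Straight up maps to the top row of the image; +x maps to its center.
print(direction_to_uv((0.0, 1.0, 0.0)))  # (0.5, 0.0)
print(direction_to_uv((1.0, 0.0, 0.0)))  # (0.5, 0.5)
```

A real implementation would likely interpolate between texels instead of taking the nearest one, but the mapping from direction to (u, v) stays the same.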

This light type lets us blend the color of the surrounding environment into the surfaces of the objects inside the scene.

Spherical environment light renders are provided below. Notice that the left side of the face mesh picks up a yellowish color from the nearby yellow wall in the texture:

head_env_light.xml (1600x900) /w 8 thrd, 900 MSAA The darkness is due to a bug in my tonemapping implementation. Below is a lightened version of the same .png (made with the Shotwell application). The .exr render of this scene can also be checked to see my actual (correct) HDR output.

head_env_light.xml (1600x900) /w 8 thrd, 900 MSAA 22 minutes 17 secs to render.

Bonus Scene

Below is a basic scene (levitating_dragon.xml) that I have created by editing the head_env_light.xml scene file (the scene file is available as a 50.6 MB .zip file here).

I have removed the head mesh, and then added 1 mirror sphere and 1 reddish-glass dragon with some basic transformations. The lighting is still a spherical environment light with the same enveloping HDR texture:

levitating_dragon.xml (1600x900) /w 8 thrd, 4 MSAA, 5 MaxRecursionDepth, Mirror sphere (0.9, 0.9, 0.9) reflectance, Dragon mesh (0.99, 0, 0) transparency and 2.0 refractive index. See the yellowish color reflectance on the back of the dragon.

levitating_dragon.xml (1920x1080) /w 8 thrd, 100 MSAA, 4 MaxRecursionDepth, Sphere was placed a bit far away in this one.

Path Tracing

In this assignment, when path tracing is enabled for a scene, we calculate both the direct and the indirect lighting and use them together to illuminate the pixels. By doing so, we aim to get a nice illumination in the path traced scenes. We first run the direct illumination computation (diffuse, specular, reflectance etc.). Then we create an ONB at the hit point, oriented along the hit point normal, and send a random ray (chosen with uniform or importance sampling) into the scene from the hit point. The illumination contribution of that ray chain (a path of rays that continues until MaxRecursionDepth) is added to the total color of the pixel we’re currently working on.

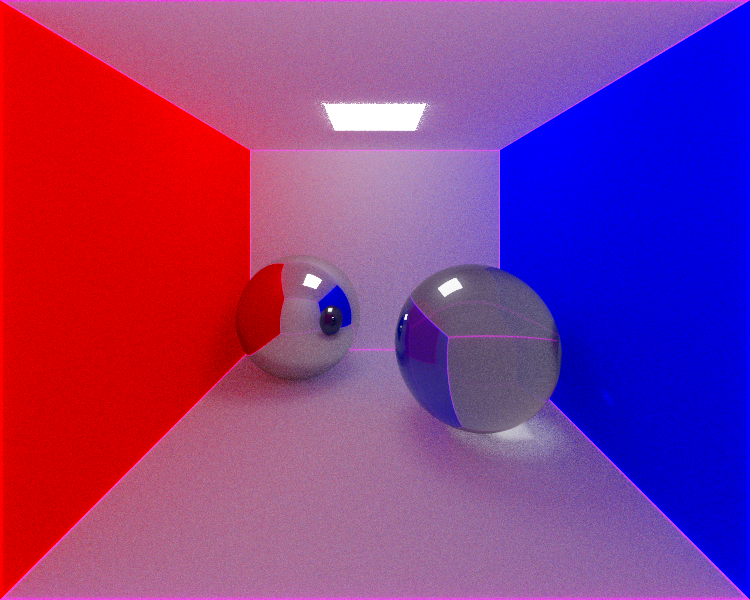

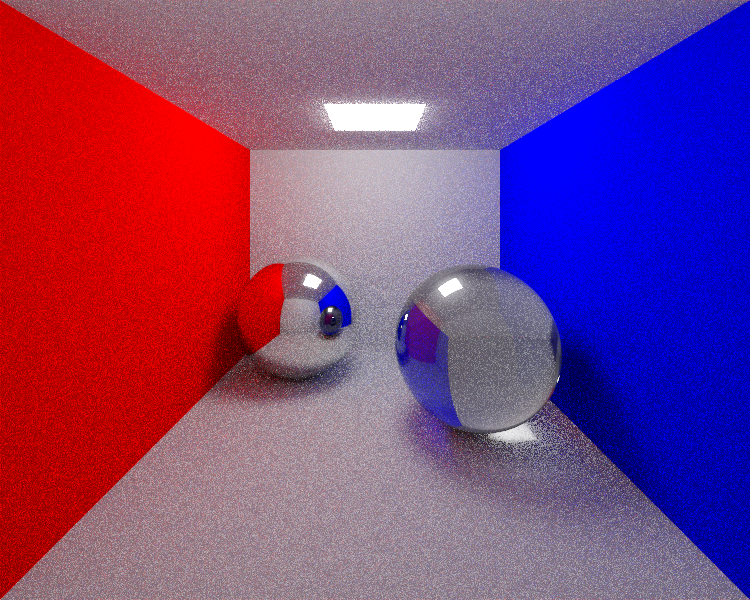

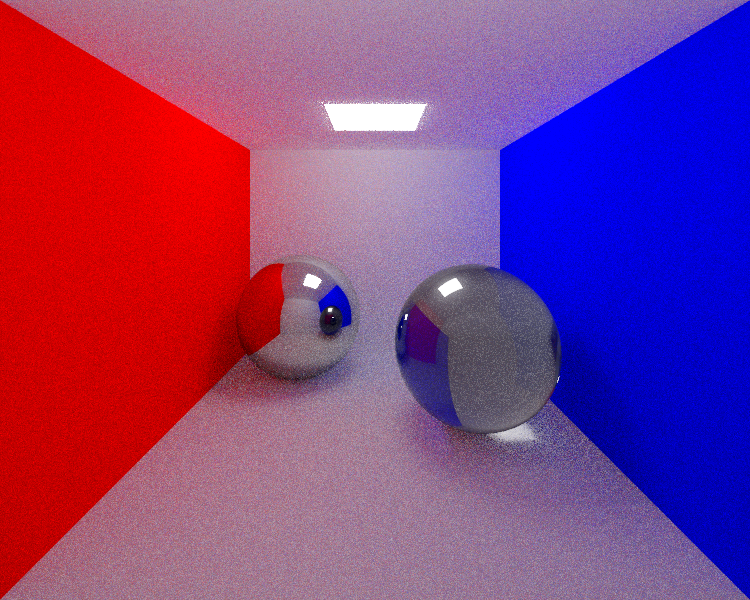
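The random direction step can be sketched as below, for both sampling modes. This is a minimal sketch under assumptions, not the raytracer's actual code: the function names are hypothetical, and "importance sampling" is taken here to mean the usual cosine-weighted hemisphere sampling, which may differ in detail from the formulas in the images.

```python
import math
import random

def normalize(v):
    n = math.sqrt(sum(x * x for x in v))
    return tuple(x / n for x in v)

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def build_onb(n):
    # Two unit vectors u, v that complete the unit normal n to an ONB.
    axis = min(range(3), key=lambda i: abs(n[i]))
    helper = tuple(1.0 if i == axis else 0.0 for i in range(3))
    u = normalize(cross(helper, n))
    v = cross(n, u)
    return u, v

def sample_hemisphere(normal, importance, rng=random):
    # Returns (direction, cos_theta, pdf) for a direction around `normal`.
    u, v = build_onb(normal)
    xi1, xi2 = rng.random(), rng.random()
    phi = 2.0 * math.pi * xi2
    if importance:
        # Cosine-weighted: directions near the normal are chosen more often.
        cos_t = math.sqrt(1.0 - xi1)
        pdf = cos_t / math.pi
    else:
        # Uniform over the hemisphere's solid angle.
        cos_t = xi1
        pdf = 1.0 / (2.0 * math.pi)
    sin_t = math.sqrt(max(0.0, 1.0 - cos_t * cos_t))
    direction = tuple(normal[i] * cos_t
                      + u[i] * sin_t * math.cos(phi)
                      + v[i] * sin_t * math.sin(phi) for i in range(3))
    return direction, cos_t, pdf
```

For a diffuse surface, the radiance returned along the sampled ray is scaled by BRDF * cos_theta / pdf. With cosine-weighted sampling the cos_theta term cancels against the pdf, which is one reason the importance-sampled render tends to show less noise at the same sample count.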

Below are my outputs. Check the caustic effect below the glass sphere (which is a natural result of path tracing, and an effect that almost makes me shed tears of joy); and the color blend of the side walls onto the ceiling, floor, and the back wall:

cornellbox_path_uniform_100.xml (750x600) /w 8 thrd, 100 MSAA Path tracing, Uniform sampling. The caustic is formed by rays that are cast randomly from the points inside the caustic towards the glass sphere and that end up hitting the area light on the ceiling.

cornellbox_path_importance_100.xml (750x600) /w 8 thrd, 100 MSAA Path tracing, Importance sampling. Produces a slightly less noisy image than uniform sampling.

Sponza Scene

Because I thought the Sponza scene had been provided by our instructor outside the scope of this assignment, I postponed rendering it until after I complete this last assignment and the project.

I will implement the parsing of the Sponza scene file and render it. Since this scene takes a long time to render, I expect to add my outputs here in a few days.

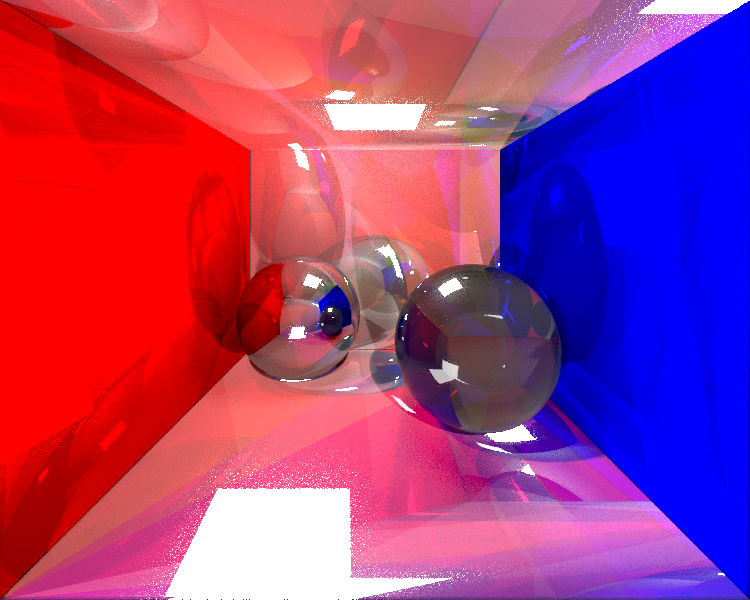

Weird Renders

During the implementation phase of the assignment, I have (let’s say accidentally 🙂 ) rendered some crazy images:

EXR Outputs

You can view my .exr outputs by following the links below (move the slider handles to the right step by step, with the “Gamma 2.0” option selected):

- cornellbox_jaroslav_diffuse.exr

- cornellbox_jaroslav_diffuse_area.exr

- cornellbox_jaroslav_glossy.exr

- cornellbox_jaroslav_glossy_area.exr

- cornellbox_jaroslav_glossy_area_ellipsoid.exr

- cornellbox_jaroslav_glossy_area_small.exr

- cornellbox_jaroslav_glossy_area_sphere.exr

- cornellbox_jaroslav_nobrdf.exr

- cornellbox_path_importance_100.exr

- cornellbox_path_uniform_100.exr

- head_env_light.exr (too big to visualize on the web browser, download only)

- levitating_dragon.exr (too big to visualize on the web browser, download only)

That’s the end of the seventh assignment!

Hope to see you in the term project post!

Happy tracing!

Credits

The scenes that contain “jaroslav” tag in their names are taken from http://cgg.mff.cuni.cz/~jaroslav/teaching/2015-npgr010/index.html.

Just excellent!

Hocam thank you so much!

Also thank you for all the effort you’ve put into Adv. Ray Tracing course during the semester!